This article shows how to automate the deployment of an HPC environment on Azure using the open source project AzureHPC.

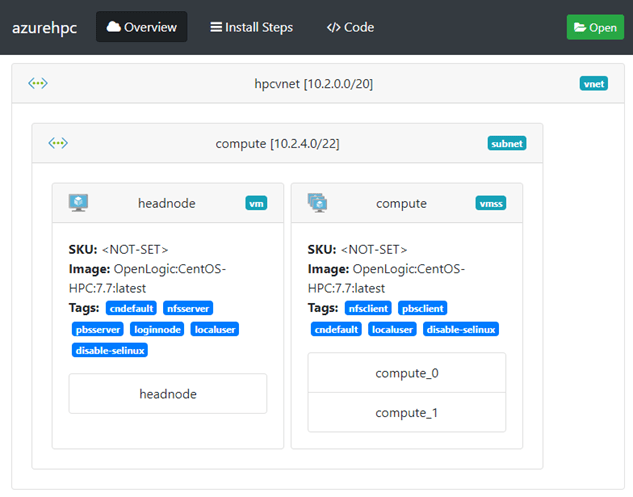

The deployed environment will consist of:

- A Virtual Network named "hpcvnet" and a subnet named "compute".

- A Virtual Machine named "headnode":

  - PBS job scheduler

  - 2 TB NFS file system

- A Virtual Machine Scale Set named "compute", which contains 2 instances.

Prerequisites:

- A Linux environment with the Azure CLI installed, for example WSL 2.0 on Windows 10. Alternatively, you can use Cloud Shell in the Azure portal.

- An Azure subscription with sufficient quota, including:

  - 1 x DS8_v3 (8 cores)

  - 2 x HC44rs (88 cores)
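The quota figures above come from simple arithmetic, which the following sketch spells out. The variable and function names are illustrative, and the region in the commented `az` call is an assumption (it is the one that appears in the sample login output later in this article). Keep in mind that Azure tracks vCPU quota per VM family as well as per region, so the 8 cores and the 88 cores are counted against different family limits.

```shell
#!/usr/bin/env bash
# Cores needed by the environment described above.
headnode_cores=8         # 1 x DS8_v3
compute_cores_each=44    # one HC44rs instance
compute_instances=2      # size of the "compute" scale set

# Print the total number of cores the deployment will consume.
required_cores() {
    echo $(( headnode_cores + compute_cores_each * compute_instances ))
}

echo "Total cores required: $(required_cores)"

# To compare against your current usage and limits (assumes you are logged in
# and that westus2 is your target region):
#   az vm list-usage --location westus2 --output table
```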

Step-by-step:

1. Download the AzureHPC repo.

# log in to your Azure subscription

$ az login

# Create your working directory

$ mkdir airlift

$ cd airlift

# Clone the AzureHPC repo

$ git clone https://github.com/Azure/azurehpc.git

$ cd azurehpc

# Source the install script

$ source install.sh

The /examples folder contains many HPC templates. To use one, change directory to the template folder and edit its config.json file.

2. Edit config.json.

# We will use the examples/simple_hpc_pbs template here

$ cd examples/simple_hpc_pbs

# Edit config.json in VS Code (or use: vi config.json)

$ code .

Enter values for "location", "resource_group", and "vm_type". You can also change the network or storage settings as needed.
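Before deploying, it can help to confirm that the settings named above are still present in the file after editing. The helper below is a hypothetical convenience, not part of AzureHPC: it only checks that each key name appears somewhere in the file, and does not validate the values or the template's actual layout.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print every key name that does NOT appear in a JSON file.
# This is a plain text search, not a JSON parse.
missing_keys() {
    local file=$1
    shift
    local key
    for key in "$@"; do
        grep -q "\"$key\"" "$file" || echo "$key"
    done
}

# Run the check only if a config.json exists in the current directory.
if [ -f config.json ]; then
    missing_keys config.json location resource_group vm_type
fi
```

An empty result means all three names were found; any name printed is one to look for in the file before running the build.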

3. Deploy the HPC environment.

$ azhpc-build

The deployment should complete in about 10 to 15 minutes.

4. Connect to "headnode" to check the HPC environment you just deployed.

Note that /share is mounted, and the /apps, /data, and /home directories are exported and accessible from the PBS nodes. You can run "ssh <PBSNODENAME>" to verify.

$ azhpc-connect -u hpcuser headnode

Fri Jun 28 09:18:04 UTC 2019 : logging in to headnode (via headnode6cfe86.westus2.cloudapp.azure.com)

$ pbsnodes -avS

$ df -h
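Reading through the `df -h` output by eye works, but a small loop can make the check quicker to repeat on each node. This is a minimal sketch with a hypothetical function name; it only tests that each directory exists on the node where it runs, and does not confirm that the directory is backed by NFS.

```shell
#!/usr/bin/env bash
# Report whether each directory passed as an argument exists on this node.
# Returns non-zero if any directory is missing.
check_shared_dirs() {
    local dir status=0
    for dir in "$@"; do
        if [ -d "$dir" ]; then
            echo "ok: $dir"
        else
            echo "missing: $dir"
            status=1
        fi
    done
    return "$status"
}

# On headnode or on a compute node:
#   check_shared_dirs /share /apps /data /home
```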

5. PBS is installed and configured on "headnode", so you can submit jobs and check job status.

$ qstat -Q
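To try an actual submission, you need a job script. The sketch below writes a minimal one; the job name, resource request, and walltime are illustrative assumptions rather than values taken from the AzureHPC template, so adjust them to your cluster.

```shell
#!/usr/bin/env bash
# Write a minimal PBS job script to the given path.
# The directives below are example values, not AzureHPC defaults.
write_hello_job() {
    local path=$1
    cat > "$path" <<'EOF'
#!/bin/bash
#PBS -N hello
#PBS -l select=2:ncpus=1
#PBS -l walltime=00:05:00
#PBS -j oe
cd "$PBS_O_WORKDIR"
# Print the distinct hosts the scheduler assigned to this job
sort -u "$PBS_NODEFILE"
EOF
}

# On headnode:
#   write_hello_job hello.pbs
#   qsub hello.pbs
#   qstat -a
```

Requesting two chunks with `select=2` is meant to exercise both compute instances; the job's output file should then list the hosts it was given.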

6. Delete the environment.

$ azhpc-destroy

Alternatively, delete the whole resource group from the Azure portal.